Quick Summary

| Key Insight | What You Need to Know |
|---|---|
| Fostering Positive Engagement | By pinning great user comments or jumping into constructive conversations, they help build a vibrant and supportive community around your brand. |
| Upholding Community Guidelines | They consistently enforce your brand’s rules of conduct. This ensures fairness and sets clear expectations for how people should behave in your space. |
| Legal Team | For things like defamation, intellectual property theft, or threats of a lawsuit. |
| PR/Communications | For a sudden wave of negative comments or a story that’s about to break. |
| Customer Service | For complex product complaints that need an expert to step in. |

A social media content moderator is far more than just a comment deleter. Think of them as the strategic guardians of a brand's entire online community and reputation. Their main job is to make sure your digital spaces stay safe, constructive, and true to your brand's values by keeping a close watch on user-generated content and taking action when needed.

The Unseen Guardians of Brand Reputation

I like to think of a good moderator as the digital equivalent of a city planner. A city planner designs public squares to be safe and encourage positive community interactions. In the same way, a moderator cultivates a brand’s online spaces—its comment sections, forums, and groups—to do exactly that. They are your frontline defense against the chaos that can quickly swamp an unmanaged social media presence.

This isn't a passive role. These professionals are actively shaping the user experience, which has a massive impact on how customers see your brand. Their work is a constant balancing act between enforcement and engagement. They’re tasked with removing harmful content, but at the same time, they're looking for opportunities to nurture positive discussions, answer questions, and build a truly loyal community.

More Than Just Deleting Comments

The day-to-day of a social media content moderator goes way beyond just hitting "delete" on a nasty comment. Their real strategic value comes from a much wider range of activities that protect and grow a brand’s digital footprint.

Here’s what they’re really doing:

- Protecting Brand Safety: They act as a shield, protecting your community from spam, hate speech, misinformation, and harassment. This creates a secure environment where your real followers feel comfortable participating.

- Fostering Positive Engagement: By pinning great user comments or jumping into constructive conversations, they help build a vibrant and supportive community around your brand.

- Upholding Community Guidelines: They consistently enforce your brand’s rules of conduct. This ensures fairness and sets clear expectations for how people should behave in your space.

- Gathering User Insights: Moderators have their finger on the pulse of customer sentiment. They spot common complaints, popular feature requests, and emerging trends that can be pure gold for your business strategy.

A brand’s comment section is its digital storefront window. A moderator’s job is to keep it clean, welcoming, and safe for everyone, ensuring that the first impression is always a positive one.

Ultimately, effective moderation isn’t optional for any serious social media strategy. It's not a cost; it's a proactive investment in your brand's health. The work of a skilled social media content moderator directly influences customer loyalty, public perception, and even your bottom line.

To see how this fits into the bigger picture, you can dive into our complete guide to online reputation management. By stopping crises before they start and nurturing a healthy community, moderators build the foundation for sustainable growth and a resilient brand. It's this crucial, often invisible, work that turns a simple social media page into a thriving, valuable brand asset.

The Modern Moderation Partnership: Human Insight and AI Speed

Let's be honest: trying to manually moderate every single comment on a busy social media page is like trying to catch raindrops in a storm. It’s just not possible. The sheer volume of content today has made a new approach necessary, leading to a powerful team-up: the judgment of a human social media content moderator combined with the incredible speed of artificial intelligence.

This isn’t about humans vs. machines. It's a strategic partnership where each side brings something essential to the table. Think of AI as your first line of defense—a tireless guard scanning millions of comments in the blink of an eye.

AI excels at catching the low-hanging fruit. It can instantly flag and remove obvious spam links, known hate speech, or graphic content, often before anyone even sees it. This initial sweep is what keeps a high-traffic community from becoming a total mess.

Where Human Judgment Is Irreplaceable

But for all its speed, AI lacks one critical thing: wisdom. The internet runs on nuance, sarcasm, and inside jokes that constantly change, and this is where even the smartest algorithms can get completely lost.

A human moderator knows that a comment like "this new design is fire" is a huge compliment, not a threat. AI, on the other hand, might just see the word "fire" and flag it. It's this ability to understand context and intent that makes a person indispensable.

The best moderation strategies don’t replace human intuition; they amplify it. AI handles the noise, freeing up your expert moderators to focus on the tricky cases that truly define your community’s culture.

This human touch is what builds trust. An overly aggressive AI can create "false positives," deleting perfectly fine comments and frustrating your most loyal fans. A person can make the fair, context-aware calls that keep your community healthy and engaged.

The Power of a Combined Approach

Pairing human skill with AI efficiency creates a workflow that is both smart and scalable. It's no wonder this hybrid model is becoming the industry standard and driving major growth. The global content moderation market is projected to hit around $15 billion by 2025, largely because companies are adopting AI to support, not replace, their human teams. Industry reports on the growth of the content moderation market cover these figures in more depth.

So, how do the two approaches really stack up against each other? The table below breaks it down.

Comparing Human Moderators and AI Moderation Systems

This side-by-side comparison shows exactly where each approach shines and where it falls short, making it clear why a combination of the two is often the best path forward.

| Attribute | Human Moderator | AI Moderation System |
|---|---|---|
| Speed & Scale | Limited; can only review one piece of content at a time. | Massive; can process thousands of items per second, 24/7. |
| Nuance & Context | High; excels at understanding sarcasm, cultural jokes, and intent. | Low; often struggles with context and can misinterpret slang or irony. |
| Adaptability | High; can quickly learn new community norms and evolving language. | Low; requires extensive retraining with new data to adapt. |
| Consistency | Can vary; subject to fatigue, bias, or different interpretations. | High; applies the exact same rules consistently every single time. |
| Cost | Higher operational cost per moderator. | Lower cost at scale, but with significant initial setup costs. |

As you can see, neither one is a complete solution on its own. A brand that relies only on AI will inevitably make context-blind mistakes that alienate its audience. On the flip side, a brand using only people will quickly get overwhelmed, leading to slow responses and letting harmful content slip through the cracks.

The ideal solution is a seamless partnership. AI systems do the initial heavy lifting, flagging potential problems and dealing with the obvious spam. This filtered, more manageable queue is then passed to a human social media content moderator, who applies critical thinking to the gray areas. For those looking to set up this kind of system, it's worth exploring the best AI comment moderation tools for 2025 to see what's possible.

This synergy gives your brand the best of both worlds: machine-level speed and human-level understanding, creating a safer and more accurately managed online community.
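To make that hand-off a little more concrete, here is a minimal Python sketch of how a hybrid queue might route comments. It is an illustration only, not how any particular tool works: `route_comment`, the `violation_score` field (assumed to be a 0-to-1 score from some AI classifier), and both thresholds are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values would be tuned against your own data.
AUTO_HIDE_THRESHOLD = 0.95     # classifier is nearly certain this is a violation
AUTO_APPROVE_THRESHOLD = 0.10  # classifier is nearly certain this is fine


@dataclass
class Comment:
    text: str
    violation_score: float  # assumed output of an AI classifier, 0.0 to 1.0


def route_comment(comment: Comment) -> str:
    """Return 'auto_hide', 'auto_approve', or 'human_review'."""
    if comment.violation_score >= AUTO_HIDE_THRESHOLD:
        return "auto_hide"
    if comment.violation_score <= AUTO_APPROVE_THRESHOLD:
        return "auto_approve"
    # Everything in between is a gray area that needs human judgment.
    return "human_review"


if __name__ == "__main__":
    samples = [
        Comment("Buy followers at sketchy-link.example", 0.99),
        Comment("Just ordered mine, can't wait!", 0.02),
        Comment("This new design is fire", 0.55),
    ]
    for c in samples:
        print(f"{route_comment(c):13} <- {c.text}")
```

The design choice worth noticing is the middle band: widening it sends more comments to people (slower, but fewer context-blind mistakes), while narrowing it leans harder on automation.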

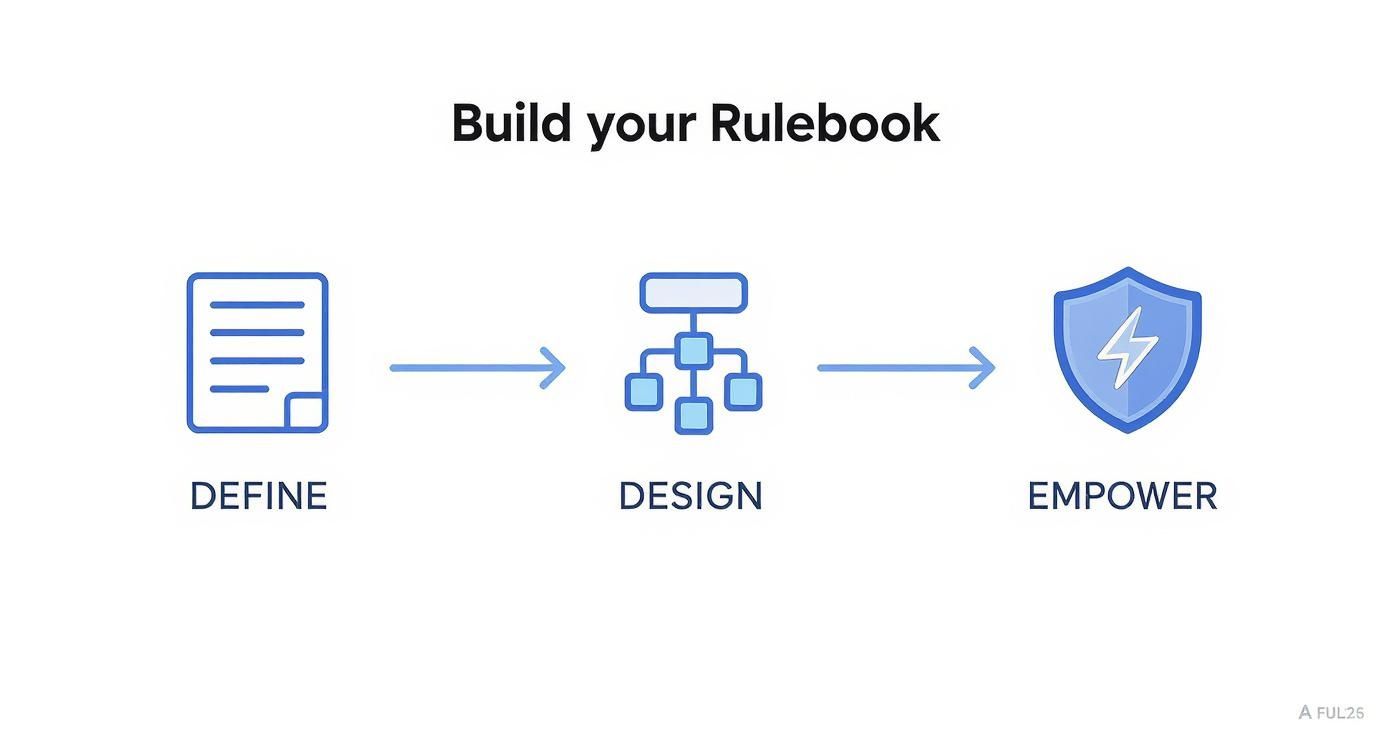

Building Your Brand's Digital Rulebook

Think of your moderation policy as the constitution for your online community. It's the bedrock for every decision your social media content moderator makes, ensuring every action is consistent, fair, and defensible. Without it, you're essentially letting individual moderators make subjective calls on the fly.

That kind of inconsistency is a recipe for disaster. One moderator might delete a comment that another would leave up, creating confusion and opening the door to accusations of bias. A solid, well-thought-out rulebook removes that guesswork. It's the single source of truth that keeps your brand's voice coherent and your online spaces safe.

This document isn’t just a list of "don'ts." It's a blueprint for the kind of community you want to cultivate. It sets the tone, manages expectations, and ultimately protects both your audience and your brand from unnecessary headaches.

Core Components of a Strong Moderation Policy

A truly useful policy gets into the nitty-gritty. Vague mission statements won't cut it when a moderator has seconds to decide on a comment. Your rulebook needs to draw clear lines in the sand, tailored specifically to your audience and industry.

Here are the pillars every strong policy is built on:

- Defining Violations with Examples: Don't just forbid "harassment." Show what it looks like. Give concrete examples, like targeted insults based on identity, sharing someone’s private information (doxxing), or spamming a user with unwanted mentions.

- Spam and Self-Promotion Rules: Get specific. Can users share their own blog posts? Are affiliate links okay? Lay out exactly what you consider spam, from posting irrelevant links to dropping the same commercial message over and over.

- Off-Topic Content Guidelines: Decide how you'll handle comments that derail the conversation. This helps keep discussions focused and prevents your comment sections from turning into a free-for-all on unrelated, often heated topics.

- Brand-Specific Nuances: Every brand has its own quirks. A financial services company, for instance, will need much stricter rules about users giving unlicensed financial advice. A gaming community might have a zero-tolerance policy for spoilers.

Your policy should be a living document. Online slang, memes, and community norms change constantly. Revisit and update your guidelines regularly to keep them relevant.

Don't hide this document away. Making your guidelines public is a massive step toward building trust. When people know the rules and see them applied fairly, they’re far more likely to contribute positively. It shifts the perception of moderation from a shadowy enforcement arm to a shared responsibility for keeping the community healthy.
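If your team keeps its rulebook in a shared tool or feeds it to software, the pillars from this section can also be written down as structured data. The sketch below is one hypothetical way to do that in Python; the `PolicyRule` fields, the action names, and the sample rules are assumptions for illustration, not a standard format.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyRule:
    name: str
    description: str
    examples: list[str] = field(default_factory=list)
    default_action: str = "hide"  # hypothetical action name


# Sample rules only; your own policy would be tailored to your audience.
POLICY = [
    PolicyRule(
        name="harassment",
        description="Targeted abuse of another user.",
        examples=[
            "Insults aimed at someone's identity",
            "Sharing someone's private information (doxxing)",
            "Spamming a user with unwanted mentions",
        ],
    ),
    PolicyRule(
        name="spam",
        description="Irrelevant links or repeated commercial messages.",
        examples=["Posting the same promotional message over and over"],
    ),
]


def rules_missing_examples(policy):
    """Return the names of rules that still need concrete examples."""
    return [rule.name for rule in policy if not rule.examples]
```

A check like `rules_missing_examples(POLICY)` is a cheap way to catch vague rules during a policy review, since every rule without examples shows up by name.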

Designing a Clear Escalation Workflow

Let's be real: not every comment is a simple "delete" or "approve" decision. You're going to run into gray areas, borderline comments, or the first signs of a full-blown brand crisis. That's where a clear escalation workflow comes in.

Think of it as a decision tree for your moderation team. It maps out exactly who to loop in and when, so a single moderator never has to carry the weight of a high-stakes decision alone. It gives them the confidence to act quickly because they have a clear protocol to fall back on.

A standard workflow might look something like this:

- Initial Triage: A moderator flags a comment that feels off but doesn't fit neatly into a violation category.

- Consultation: They ping a senior moderator or team lead for a second look, pointing to the specific rule that's in question.

- Departmental Escalation: If things get serious, like a legal threat or a potential PR nightmare, the workflow tells the moderator exactly who to notify.

  - Legal Team: For things like defamation, intellectual property theft, or threats of a lawsuit.

  - PR/Communications: For a sudden wave of negative comments or a story that’s about to break.

  - Customer Service: For complex product complaints that need an expert to step in.

- Resolution and Documentation: After a decision is made, the action and the reasoning behind it are logged. This paper trail is invaluable for training new moderators and refining your policy over time.

Having this structure in place means critical issues get to the right people fast, turning what could be a crisis into a managed situation.
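Here is a rough Python sketch of the escalation workflow as a lookup table plus a log. Treat it as a starting point under stated assumptions: the `Issue` categories, the owner names, and the log fields are all hypothetical, chosen to mirror the teams named in this section.

```python
from enum import Enum


class Issue(Enum):
    UNCLEAR = "unclear"            # feels off, no obvious rule match
    LEGAL = "legal"                # defamation, IP theft, lawsuit threats
    PR = "pr"                      # wave of negativity, story about to break
    PRODUCT = "product_complaint"  # complex complaint needing an expert


# Hypothetical routing table: who gets looped in for each kind of issue.
ESCALATION_ROUTES = {
    Issue.UNCLEAR: "senior_moderator",
    Issue.LEGAL: "legal_team",
    Issue.PR: "pr_communications",
    Issue.PRODUCT: "customer_service",
}

decision_log: list[dict] = []


def escalate(comment_id: str, issue: Issue, rule: str) -> str:
    """Pick the owner for a flagged comment and record the decision."""
    owner = ESCALATION_ROUTES[issue]
    # Documentation step: keep the reasoning for training and policy reviews.
    decision_log.append(
        {"comment_id": comment_id, "issue": issue.value, "rule": rule, "owner": owner}
    )
    return owner
```

For example, `escalate("c-102", Issue.LEGAL, "defamation")` would return `"legal_team"` and leave a matching entry in `decision_log`, which covers both the routing and the paper trail.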

A Day in the Life of a Social Media Moderator

Want to know what it’s really like to be a social media content moderator? Let's walk through a typical day. Picture this: your brand just dropped a hot new product. The announcement hits social media at 9 AM sharp, and the floodgates don't just open—they burst. A moderator's day starts not with a gentle trickle of comments, but a full-on tidal wave of user-generated content.

This isn't just a chaotic scramble to delete nasty comments. It's a structured, methodical process designed to handle a massive volume of interactions with incredible precision. The journey of a single comment, from the moment a user hits "post" to the final action taken, follows a clear and deliberate path. This system is what separates professional moderation from just casually checking your brand’s comments.

And the scale of this job can be staggering. One report found that moderators managed 118.4 million comments for over 450 brands in just one year. During that time, they had to hide about one out of every six comments—a stark reminder of how much spam and junk gets thrown at brands every single day. You can get a deeper look at the numbers in the 2025 Social Media Comment Insights Report.

The Content Moderation Workflow in Action

So, let's follow a few comments from that product launch we imagined. The lifecycle of each comment moves through a few key stages, mixing the raw speed of AI with the careful judgment of a human to keep the online space healthy and on-topic.

- Ingestion: This is ground zero. Every single comment, reply, and mention gets pulled into one central dashboard. Think of it as a raw, unfiltered feed of everything: excited customers, spam bots, legitimate complaints, and a few trolls for good measure.

- Triage: Here's where AI gets its first pass. An AI-powered system like FeedGuardians instantly scans the entire incoming flow. A comment with a sketchy-looking link? Automatically flagged as spam. Another one containing a racial slur? Immediately hidden based on the rules you’ve set. This initial, automated triage handles the obvious junk in milliseconds.

- Review: Now, the human moderator steps in. Their queue is already much cleaner, filled only with the comments that need a bit more thought. They see a positive post ("Just ordered mine, can't wait!"), which they might decide to pin to the top. Next up is a frustrated customer ("My discount code isn't working! Help!"). This isn't a violation, but it's a customer service fire that needs putting out.

- Action: The moderator makes a call based on the brand's rulebook. The positive comment stays up. The spam comment stays hidden. For that frustrated customer, the moderator might tag a customer service agent to jump in and solve the problem right there in the comments, turning a bad experience into a public win for the brand.

- Reporting: Every action is logged. This data is pure gold for fine-tuning the moderation strategy. The moderator might notice a spike in complaints about discount codes, which becomes valuable feedback for the marketing team to fix a broken campaign.

This whole workflow shows how a moderator brings a brand’s digital rulebook to life, creating a safer, more constructive community.

As you can see, the real goal isn't just to zap bad content. It's to strategically manage the entire conversation.
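The Reporting stage is the easiest one to picture in code. The sketch below assumes each logged action carries a `reason` label; the log entries and the `top_reasons` helper are made up for illustration, but they show how a spike like the discount-code complaints would surface.

```python
from collections import Counter

# Hypothetical action log entries, as the Reporting stage might record them.
action_log = [
    {"action": "hide", "reason": "spam"},
    {"action": "pin", "reason": "positive_feedback"},
    {"action": "escalate", "reason": "discount_code_issue"},
    {"action": "escalate", "reason": "discount_code_issue"},
    {"action": "hide", "reason": "hate_speech"},
    {"action": "escalate", "reason": "discount_code_issue"},
]


def top_reasons(log, n=3):
    """Return the n most frequent reasons in the log, most common first."""
    return Counter(entry["reason"] for entry in log).most_common(n)


print(top_reasons(action_log, n=1))  # [('discount_code_issue', 3)]
```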

Navigating the Human Element

Beyond the nuts and bolts of the workflow, a massive part of a moderator’s job is handling the emotional and psychological weight of it all. They are on the front lines, seeing both the best and worst of what people have to say online, and that requires a tough skin and a lot of empathy.

A great social media content moderator isn’t just a rule enforcer; they are a community psychologist, a brand diplomat, and a crisis manager all rolled into one. They read the room, de-escalate tension, and know when to step in versus when to let the community self-regulate.

Let's go back to our product launch and look at a few real-world examples:

- The Troll: A user drops a deliberately inflammatory, off-topic political comment trying to start a fight. The moderator has to decide, fast: hide the comment and ban the user, or leave it and risk the entire post getting derailed?

- The Sarcastic Critic: Someone posts, "Wow, another product that will probably break in a week. Great job." An AI might miss the sarcasm, but a human moderator instantly recognizes it as negative feedback that needs an answer, not just deletion. They might reply with a link to the product’s warranty.

- The Enthusiast: A loyal fan shares their own unboxing video in the comments. The moderator spots this as awesome user-generated content and asks for permission to feature it on the brand's main page.

Every one of these situations requires sharp critical thinking and a solid grasp of the brand's voice. A moderator isn’t just clicking buttons; they're making hundreds of tiny decisions that, all together, shape how people feel about the brand and the community. It’s a job that demands immense emotional labor and is far more complex than most people realize.

You can't fix what you don't measure. For any social media content moderator or their manager, showing the value of their work means going way beyond just saying they "cleaned up the comments." Success in moderation is something you can actually count, and those numbers prove its direct impact on everything from community health and brand safety to the company's bottom line.

To do this right, you need a smart mix of operational and strategic Key Performance Indicators (KPIs). These aren't just numbers for a spreadsheet; they tell a clear, data-backed story about your moderation efforts. When you track the right data, you can justify your team's budget, fine-tune your process, and show a tangible return on investment.

This isn’t about vanity metrics, like how many comments you deleted last week. It’s about measuring the real-world speed, accuracy, and overall health of your online community.

Key Operational KPIs for Moderators

Operational KPIs are all about the nitty-gritty—the efficiency and effectiveness of the moderation process itself. Think of them as the metrics for the engine room. They tell you how well your team or your system is handling its core tasks, day in and day out, and they’re essential for spotting problems and managing your team.

A few key operational metrics to watch are:

- Time to Action (TTA): This is simply the average time it takes a moderator to deal with a flagged piece of content. A lower TTA is generally better, as long as accuracy holds up, because it means nasty comments disappear faster, limiting the damage they can do.

- Accuracy Rate: What percentage of the time does a moderator make the right call, based on your own rulebook? A high accuracy rate—we’re talking 95% or higher—is the gold standard. It proves your rules are being applied fairly and consistently.

- Moderator Activity: This is a simple volume metric, tracking how many actions (hides, deletes, escalations) a moderator gets through per hour or day. It's not a direct measure of quality, but it's incredibly useful for planning resources and making sure no one on your team is heading for burnout.

Tracking these core numbers helps you spot bottlenecks. If you see a sudden drop in accuracy, for example, it’s a red flag that your guidelines might be confusing or that a moderator needs a bit more training.
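Both Time to Action and Accuracy Rate are simple to compute once you have timestamps and an audit verdict for each item. Here's a small Python sketch; the `reviewed` sample, its field names, and the idea that a second reviewer marks each call as `correct` are assumptions for the example.

```python
from datetime import datetime
from statistics import mean

# Hypothetical audit sample: when each item was flagged and actioned, plus
# whether a second reviewer agreed with the moderator's call.
reviewed = [
    {"flagged": datetime(2025, 1, 6, 9, 0), "actioned": datetime(2025, 1, 6, 9, 4), "correct": True},
    {"flagged": datetime(2025, 1, 6, 9, 1), "actioned": datetime(2025, 1, 6, 9, 11), "correct": True},
    {"flagged": datetime(2025, 1, 6, 9, 2), "actioned": datetime(2025, 1, 6, 9, 3), "correct": False},
    {"flagged": datetime(2025, 1, 6, 9, 5), "actioned": datetime(2025, 1, 6, 9, 10), "correct": True},
]


def time_to_action_minutes(items):
    """Average minutes between a flag and the moderator's action."""
    return mean((i["actioned"] - i["flagged"]).total_seconds() / 60 for i in items)


def accuracy_rate(items):
    """Share of decisions the audit marked as correct (0.0 to 1.0)."""
    return sum(i["correct"] for i in items) / len(items)


print(f"TTA: {time_to_action_minutes(reviewed):.1f} min")  # TTA: 5.0 min
print(f"Accuracy: {accuracy_rate(reviewed):.0%}")          # Accuracy: 75%
```

In this made-up sample the accuracy lands at 75%, well under the 95% target mentioned above, which is exactly the kind of gap that should prompt a look at the guidelines or at training.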

Strategic Metrics That Show Business Impact

While operational KPIs are crucial for managing your team, strategic metrics are what connect your work to the bigger business goals. These are the numbers you bring to the leadership team to prove that a great social media content moderator is creating real, measurable value.

These KPIs tell you the true story of your community’s health:

- Ratio of Positive to Negative Comments: This is a fantastic indicator of the overall vibe in your community. A solid moderation strategy should cause this ratio to climb over time, which is hard evidence of a healthier, more positive space.

- User-Reported Content Volume: Seeing a drop in the number of comments your own audience flags is a huge win. It means your proactive moderation is catching problems before your community members have to. You're getting ahead of the curve.

- Engagement on Key Posts: Let's face it, healthy comment sections are more inviting. When people feel safe, they're more likely to jump in. Keep an eye on engagement rates (likes, shares, comments) on your most important posts to see if cleaner conversations are driving more interaction.

When you focus on these kinds of KPIs, you shift the entire conversation. You stop talking about "how many comments did we delete?" and start asking, "how much healthier is our community?" This data-first approach proves that good moderation isn’t just a defensive chore—it's a proactive strategy for building a much stronger and more engaged audience for your brand.
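To track the first two strategic metrics over time, something as small as the following Python sketch can work. The monthly numbers are invented, and the sentiment counts are assumed to come from manual tagging or whatever sentiment tool you already use.

```python
# Hypothetical monthly counts for one brand page.
monthly = [
    {"month": "2025-01", "positive": 400, "negative": 200, "user_reports": 90},
    {"month": "2025-02", "positive": 450, "negative": 180, "user_reports": 70},
    {"month": "2025-03", "positive": 520, "negative": 130, "user_reports": 40},
]


def positive_negative_ratio(row):
    # Guard against division by zero in a month with no negative comments.
    return row["positive"] / max(row["negative"], 1)


def is_improving(rows):
    """True if the ratio rises and user reports fall from each month to the next."""
    ratios = [positive_negative_ratio(r) for r in rows]
    reports = [r["user_reports"] for r in rows]
    ratio_up = all(a < b for a, b in zip(ratios, ratios[1:]))
    reports_down = all(a > b for a, b in zip(reports, reports[1:]))
    return ratio_up and reports_down


for row in monthly:
    print(row["month"], round(positive_negative_ratio(row), 2))
print("Improving:", is_improving(monthly))
```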

Navigating High Stakes in Modern Digital Spaces

https://www.youtube.com/embed/X3C-9EY2TCc

The job of a social media content moderator used to be pretty straightforward—mostly just managing comments. That world is long gone. Today, moderation is a critical part of corporate governance and the first line of defense in an online world where a small digital risk can mushroom into a full-blown business crisis overnight.

Think of moderators less as community managers and more as strategic risk managers. Their work is the invisible scaffolding that holds up a company's reputation, minimizes legal headaches, and protects the customer trust that every successful brand is built on. It only takes one misstep, one piece of viral misinformation, or one poorly handled PR flare-up to undo years of hard work.

This strategic role is amplified by the sheer speed and volume of online chatter. With over 5.31 billion people using social media globally, the connection between moderation and risk management has never been clearer. On these massive networks, false stories can travel far faster than accurate ones (one widely cited study of Twitter put it at roughly six times faster), and deepfakes add to the threat to a brand's integrity. For a deeper dive into this, Sprinklr offers some great insights on proactive brand protection.

From Viral Threats to Regulatory Hurdles

The dangers aren't just about a bruised reputation; they are increasingly legal and financial. Failing to properly moderate content can bring on serious consequences, making the work of a sharp, diligent social media content moderator absolutely essential.

Two major forces are constantly raising the stakes:

- Viral Misinformation: A false story about a product or a nasty rumor about an executive can catch fire in just a few hours, directly tanking stock prices and shattering consumer confidence.

- Evolving Regulations: New laws, like the EU’s Digital Services Act, are putting the responsibility squarely on platforms, and by extension on the brands that run communities on them, to keep their online spaces clean. Under the DSA, fines for platforms that don't comply can reach 6% of global annual turnover.

Every decision a moderator makes is a tiny exercise in risk assessment. Hiding a defamatory comment, flagging a potential legal issue, or cooling down a heated debate—each action directly contributes to the company's health and stability.

Moderators as the First Line of Defense

At the end of the day, a moderator’s job is to spot and neutralize threats before they grow and spread. They are the eyes and ears on the digital front lines, noticing emerging problems like coordinated trolling on social media or the start of a negative trend long before it ever hits a C-suite dashboard.

This proactive defense is what separates a resilient brand from a vulnerable one. When a company invests in skilled moderation, it's doing more than just tidying up its comment sections. It’s building a vital defensive wall around its most valuable assets: its reputation, its customers, and its future.

Got Questions About Content Moderation? We Have Answers.

Jumping into the world of content moderation can feel a bit overwhelming, and it's natural to have questions. Let's tackle some of the most common ones we hear from brands, breaking down the key ideas to help you build a community that's both safe and buzzing with conversation.

What Really Makes a Great Social Media Content Moderator?

It’s about more than just knowing the rules. The best moderators have a unique blend of sharp critical thinking, a deep understanding of online culture, and some serious emotional resilience. Think about it: a top-notch social media content moderator needs to instantly tell the difference between friendly banter, biting sarcasm, and a genuine threat. That requires a real feel for the nuances of online communication.

They also need a sharp eye for detail to apply your brand's rules fairly and consistently every single time. But most importantly, this role demands resilience. Moderators are on the front lines, often dealing with a stream of harmful or upsetting content, and being able to manage that emotional toll is absolutely essential.

I'm a Small Business. How Can I Possibly Afford This?

You don't need a massive budget to get started. For small businesses, the key is to be smart and scalable. Begin by creating a crystal-clear set of community guidelines and then block out dedicated time each day to go through comments yourself. Don't forget to use the free, built-in tools that social platforms like Facebook and Instagram already provide, like setting up keyword filters to catch common problems automatically.

As your community gets bigger, a hybrid approach is your best bet for staying on budget. You can use an AI-powered service to do the heavy lifting—filtering out obvious spam and clear violations—which frees up your time to handle the trickier comments that truly need a human touch.
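If you're curious what a keyword filter is doing under the hood, here's a minimal Python sketch of the idea. It is not how Facebook or Instagram implement their filters (those are configured in each platform's own settings); the blocklist and the `matches_filter` helper are hypothetical.

```python
import re

# Hypothetical blocklist; a real one would come from your community guidelines.
BLOCKED_TERMS = ["free followers", "crypto giveaway", "click here"]

# Word boundaries avoid matching inside longer words; IGNORECASE catches
# "CLICK HERE" as well as "click here".
_pattern = re.compile(
    r"\b(?:" + "|".join(re.escape(term) for term in BLOCKED_TERMS) + r")\b",
    re.IGNORECASE,
)


def matches_filter(comment: str) -> bool:
    """Return True if the comment contains any blocked term."""
    return _pattern.search(comment) is not None
```

A comment like "CLICK HERE for free stuff" would match, while "Just ordered mine, can't wait!" would pass through. Simple filters like this catch the obvious junk but not sarcasm or creative misspellings, which is why the trickier comments still need a person.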

How Do I Create a Moderation Policy That Doesn't Stifle Conversation?

The trick is to zero in on harmful behavior, not different opinions. A strong, fair policy doesn't try to silence everyone; it draws a clear line in the sand against things like harassment, hate speech, spam, and sharing private information (doxxing). For each rule, give concrete examples so people know exactly what you mean.

Aim to build a "safe and constructive" space, not a "positive-vibes-only" echo chamber. Healthy debate and even criticism of your brand should be welcome. When you're transparent about your rules and enforce them fairly for everyone, you build trust. This encourages people to engage in real conversations while still keeping the trolls and bad actors out.

Ready to protect your brand and get your time back? FeedGuardians uses smart AI to hide spammy and harmful comments, reply to customers, and even spot sales leads in real-time. See how FeedGuardians can change the game for your social media.
