Quick Summary

| Key Insight | What You Need to Know |
|---|---|
| Damaged Brand Reputation | A constant stream of negativity can seriously stain your brand’s image, making you look unprofessional or untrustworthy. |
| Loss of Customers | Imagine a potential customer visiting your page, only to be met with a hostile and chaotic comment section. They're likely to leave and never come back. |
| Drained Resources | Your team ends up wasting precious time and energy putting out fires instead of focusing on proactive strategies that actually grow the business. |

Trolling on social media is the deliberate act of posting inflammatory, offensive, or off-topic comments to provoke emotional reactions, disrupt conversations, and harass others. It’s a world away from simple negative feedback; this is malicious behavior specifically designed to poison online communities, tarnish brand reputations, and scare away real customers.

Why Trolling on Social Media Demands a Real Strategy

It's easy to write off trolling as just a part of being online—a few nasty comments you can just ignore or delete. But that mindset completely misses the real danger. Trolling isn't a minor annoyance; it’s a serious business risk that can contaminate your digital spaces and do lasting harm to your brand.

When you let trolling go unchecked, you're essentially allowing a toxic environment to take root. This negativity pushes away the genuine followers and customers who actually want to have meaningful conversations. Think of it like a community garden. If you let weeds grow wild, they’ll eventually choke out all the healthy plants you’ve worked so hard to cultivate. Trolls do the same thing to your online community, suffocating positive engagement and goodwill.

The True Cost of Inaction

Just ignoring trolls almost never works. Their entire goal is to get a reaction and derail normal conversation. Letting malicious comments sit there sends a clear signal to your audience that this kind of behavior is acceptable, which chips away at the trust and credibility you've built. The fallout from doing nothing can be significant.

- Damaged Brand Reputation: A constant stream of negativity can seriously stain your brand’s image, making you look unprofessional or untrustworthy.

- Loss of Customers: Imagine a potential customer visiting your page, only to be met with a hostile and chaotic comment section. They're likely to leave and never come back.

- Drained Resources: Your team ends up wasting precious time and energy putting out fires instead of focusing on proactive strategies that actually grow the business.

And this isn't a small problem; it's getting worse. As of 2025, online harassment is at an all-time high. Lifetime victimization (meaning someone has experienced online harassment at some point) has climbed from 33.6% in 2016 to 58.2%. That’s a staggering jump: well over half of the people surveyed report having been targeted. You can find more details in this analysis of cyberbullying trends.

A proactive, structured approach is no longer optional for any brand with an online presence. It's not about just deleting comments here and there. A real strategy combines clear policies, human judgment, and the right technology working together.

Distinguishing Trolls from Critics

One of the biggest challenges is telling the difference between a genuine customer with a problem and someone just trying to cause trouble. A legitimate critic wants a resolution; a troll wants chaos. This quick guide can help you spot the difference.

| Behavior | Legitimate Critic | Social Media Troll |
|---|---|---|
| Intent | Seeks a solution or wants to share a valid negative experience. | Aims to provoke, derail conversations, or get a reaction. |
| Language | Uses specific, fact-based complaints (even if angry). | Relies on insults, personal attacks, and inflammatory language. |
| Engagement | Is open to a dialogue and a reasonable resolution. | Ignores facts, repeats debunked claims, and refuses to engage constructively. |
| Profile | Usually has a real, established profile with a history of normal activity. | Often uses a new, anonymous, or fake-looking profile with little history. |

Knowing who you're dealing with is the first step. Responding to a troll the same way you would a frustrated customer will only add fuel to the fire.

From Reactive to Proactive Defense

Constantly reacting to trolls after they've already done damage is a losing battle—it’s like an endless game of whack-a-mole. A much better way is to build a structured defense ahead of time. This guide is your playbook for getting a handle on social media trolling, helping you shift from a defensive crouch to a position of confident control. We’ll break down how to spot different types of trolls, build solid moderation policies, and use technology to shield your community, setting the stage for a healthier and more profitable online presence.

A Field Guide to Different Types of Trolls

If you're going to manage trolling effectively, the first thing you need to accept is that not all trolls are created equal. They have different motivations, use different tactics, and demand different responses. A one-size-fits-all approach is like trying to use a sledgehammer to fix a watch—it's clumsy, ineffective, and usually makes things much worse.

Learning to spot the different troll archetypes is what separates reactive community managers from strategic ones. Think of this section as your field guide. Once you can identify the specific "species" of troll you’re dealing with, you can anticipate their behavior and deploy a much smarter counter-strategy.

And this skill has never been more critical. By 2025, the global social media user base has hit an incredible 5.66 billion identities, which is more than two-thirds of the planet's population. This sprawling digital world, with hundreds of millions of new accounts popping up every year, is the perfect breeding ground for trolling. You can dig deeper into the numbers with the global social media usage report from DataReportal.

The Classic Instigator

This is your garden-variety troll, the one most people picture. The classic instigator’s goal is pure and simple: they want to start a fire and watch it burn for their own entertainment. They’ll toss inflammatory, off-topic, or just plain wrong statements into a perfectly civil conversation to get a rise out of people.

These trolls live for the negative attention. They have zero interest in a real debate or finding a resolution; they get their kicks from the emotional reactions they can provoke. The moment you engage them, they’ve won.

- Signature Tactic: Dropping baiting questions like, "Does anyone actually believe this?" or posting deliberately false information, knowing someone will feel compelled to correct them.

- Best Response: This is where the old internet adage "do not feed the trolls" is your best friend. Ignore them. Hide their comments. Starve them of the attention they so desperately want.

The Concern Troll

The concern troll is a far more subtle and, frankly, more insidious character. They wrap their disruptive intent in a cloak of fake support and feigned worry. You'll recognize them by their opening lines: "As a longtime fan, I'm just concerned that..." or "I absolutely love what you do, but..."

Don’t be fooled. This concern is a Trojan horse. Their real mission is to plant seeds of doubt, chip away at your brand’s credibility, and poison the conversation from the inside. By posing as an ally, they give their critiques a veneer of legitimacy that can be incredibly damaging.

Concern trolls weaponize insincerity. By pretending to be on your side, they can manipulate genuine customers and hijack a positive discussion, sending it into a negative spiral. This makes them especially tricky to handle without accidentally alienating your real supporters.

The Organized Mob

Perhaps the most daunting threat is the organized mob. This isn't one person having a bad day; it's a coordinated group attack, often fueled by a specific agenda. These pile-ons can be driven by a network of real people or amplified by an army of automated bot accounts.

An organized mob can flood your channels with a huge volume of negative, repetitive, or abusive comments in a very short time. The goal is to shut down all other conversation, intimidate your community, and completely control the narrative. If you see this kind of coordinated storm, you know you're dealing with something bigger than a few disgruntled followers. It’s crucial to know what to look for, especially on platforms like Instagram—our guide on how to identify bots commenting on Instagram can help you tell the difference.

Building Your Brand’s Anti-Trolling Playbook

Handling trolls one comment at a time is exhausting, demoralizing, and ultimately a losing game. You can’t win by constantly reacting; you win by having a solid game plan before the chaos even starts. That’s where an anti-trolling playbook comes in. Think of it as your brand's official rulebook, action plan, and shield, all rolled into one.

A good playbook isn't just a list of things people can't do. It’s a public statement about your community's values. It tells everyone—your followers, your team, and the trolls—what you stand for and what lines can't be crossed. Without this foundation, your moderation feels random and biased. A strong playbook is a cornerstone of any serious online reputation management strategy.

Defining the Rules of Engagement

First things first: you need to spell out exactly what is and isn't acceptable in your community. Trolls thrive on ambiguity, so vague guidelines like "be nice" are basically an invitation for trouble. Get specific. Your policy needs to be crystal clear, leaving no room for "creative" interpretations.

Here are the non-negotiables to include in your rules:

- Hate Speech: Any language attacking people based on race, religion, gender, sexual orientation, disability, or other core aspects of their identity.

- Harassment and Personal Attacks: This covers everything from name-calling and direct threats to posting someone's private information (doxxing).

- Spam and Self-Promotion: Lay down the law on repetitive, off-topic posts and unsolicited ads. This keeps the conversation focused and valuable for everyone else.

- Misinformation: Take a firm stance on the deliberate spread of false information that could cause real-world harm.

When you’re this explicit, you empower your moderators. They aren't making subjective judgment calls anymore; they're simply enforcing the clear rules you’ve already set.

Establishing Clear Consequences

Once the rules are clear, the consequences for breaking them have to be just as clear. A transparent system of penalties shows you’re being fair and shuts down accusations of censorship. The best approach is a tiered system where the punishment fits the crime.

A simple three-strike system is a great place to start:

- First Offense: The comment gets deleted, and the user gets a public warning. Treat it as a teaching moment.

- Second Offense: The user gets a temporary timeout. A 24-hour or a 7-day mute from your page usually gets the message across.

- Third Offense: A permanent ban from all of your social communities. No more chances.

This tiered approach shows you’re willing to give people a chance to correct their behavior, but you have zero tolerance for repeat offenders. Of course, for serious violations like credible threats or blatant hate speech, you should feel empowered to skip straight to a permanent ban.
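If your team tracks strikes in a spreadsheet or an internal tool, the ladder above is simple enough to encode directly. The snippet below is only a minimal sketch of that logic: the names `Action`, `Violation`, and `decide_action` are made up for illustration, and the `severe` flag stands in for whatever your own policy counts as a credible threat or blatant hate speech.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    WARN = "delete comment and warn user"
    MUTE = "temporary mute (for example 24 hours or 7 days)"
    BAN = "permanent ban"


@dataclass
class Violation:
    user_id: str
    # Assumption: a moderator or tool has already judged whether this is a
    # serious violation such as a credible threat or blatant hate speech.
    severe: bool = False


def decide_action(prior_offenses: int, violation: Violation) -> Action:
    """Return the penalty for a violation, given the user's earlier strikes."""
    if violation.severe:
        return Action.BAN   # serious violations skip straight to a ban
    if prior_offenses == 0:
        return Action.WARN  # first offense
    if prior_offenses == 1:
        return Action.MUTE  # second offense
    return Action.BAN       # third offense or later
```

Calling `decide_action(1, Violation("user_42"))` returns `Action.MUTE`, while any violation marked `severe=True` returns `Action.BAN` regardless of history.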

The stakes are incredibly high, especially for younger audiences. Consider that 58% of U.S. students aged 13 to 17 have experienced cyberbullying, and a staggering 73% of young women have received unwanted sexual content online. These aren't just numbers; they’re a powerful reminder that firm rules protect real people. You can read more about the widespread impact of cyberbullying on Panda Security.

Communicating Your Policy and Appeals Process

A playbook is completely useless if it’s a secret. You have to make sure your community guidelines are front and center. Pin them to the top of your Facebook group, create a dedicated Instagram Story highlight, or link to them directly in your bio on X (formerly Twitter). Make them impossible to miss.

Finally, offer a simple, straightforward way for people to appeal a decision. This isn’t about letting trolls off the hook; it’s about showing you’re committed to fairness. Something as simple as a dedicated email address for appeals builds trust and signals that there are thoughtful humans behind the screen, not just automated bots. It’s the final piece that turns your playbook from a punishment tool into a community-building one.

Creating a Smart Escalation Workflow That Works

Having a solid moderation policy is a great start, but it’s only half the battle. A policy is just a document until you build a clear, repeatable process for enforcing it—especially when your team is in the trenches during a coordinated troll attack. This is where a smart escalation workflow becomes your best friend.

Think of an escalation workflow as a road map for your moderation team. It creates a tiered response system, making sure every incident is handled consistently, quickly, and by the right person. This gets rid of the guesswork, helps prevent moderator burnout, and stops your brand from making rushed, emotional decisions in the heat of the moment.

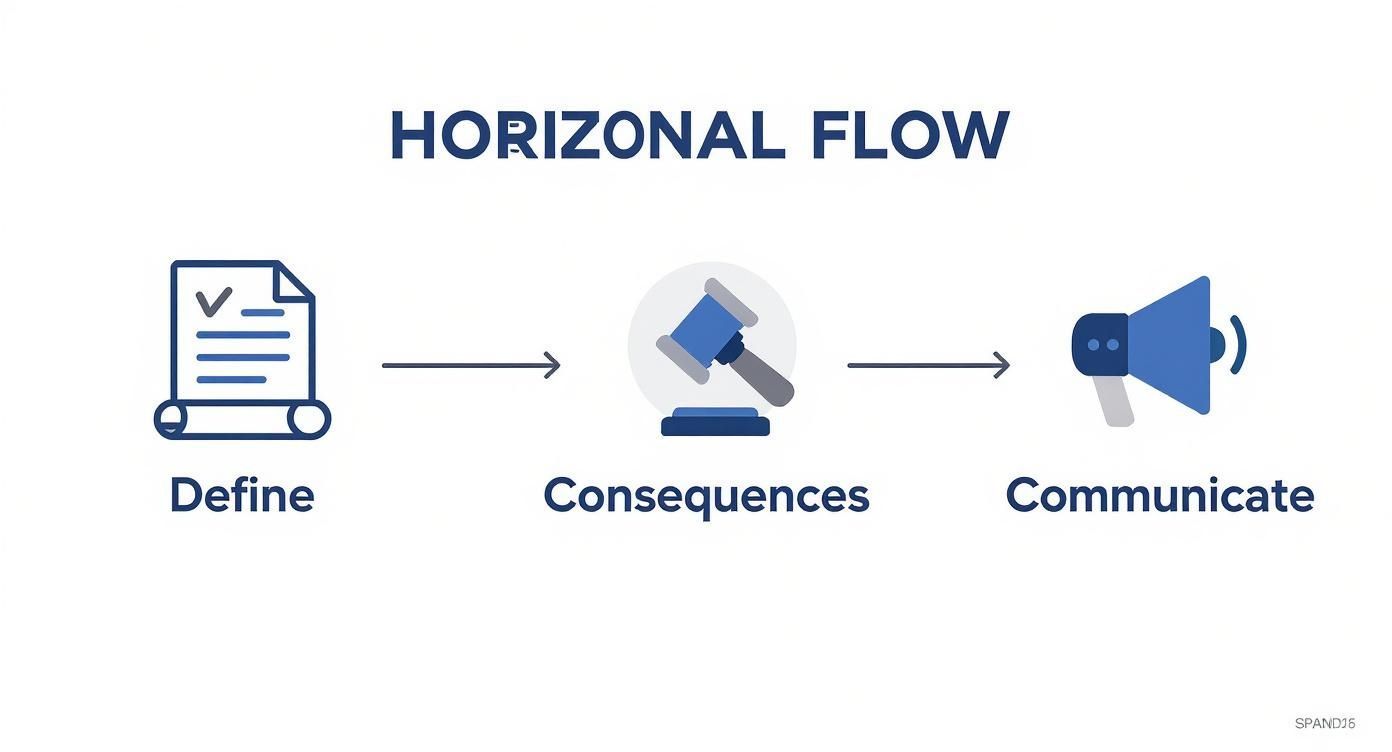

This visual guide breaks down the essential steps for building your anti-troll playbook, from setting the rules to communicating them clearly.

As you can see, a successful strategy hinges on defining clear rules, establishing real consequences, and making sure your community knows where the lines are drawn.

Level One: The Front Line

The first level of your workflow is where most of the action happens. This tier is managed by your frontline community managers and any AI-powered automation tools you have in place. Their job is to deal with clear-cut violations of your community guidelines, and to do it fast.

Think of Level 1 as your brand’s immune system. It’s designed to automatically filter out common, low-level threats before they have a chance to spread. These actions should be swift and require minimal debate.

- Who Handles It: Frontline moderators, community managers, and automated tools.

- Example Scenarios: Deleting obvious spam, hiding comments with profane keywords, and blocking accounts that are clearly bots.

- Standard Actions: Hiding or deleting the offending comment, issuing a pre-written warning, or applying a temporary mute for a repeat offense.

Level Two: Senior Review for Complex Cases

Not every situation is black and white. When a moderator comes across a comment that’s in a gray area, they need a clear path to escalate it for a second opinion. Level 2 is for these tricky cases that require more context and an experienced eye.

This tier is crucial because it keeps frontline moderators from having to make high-stakes decisions alone and ensures your policy is applied fairly. A classic Level 2 case could be a comment that tiptoes right up to the line of your hate speech rules, or maybe a complaint from a high-profile customer that needs a delicate touch.

An escalation to Level 2 isn't a sign of failure; it’s a sign that your system is working. It provides a safety valve for complex situations, protecting both your brand and your moderators from making critical errors.

Level Three: Crisis Response

The final tier is reserved for the most severe threats—the kind of incidents that pose a genuine risk to your business, your team, or your community. These situations are bigger than just comment moderation and need input from other departments across the company.

Level 3 incidents are rare, but they’re critical. Having a pre-defined protocol means you’re not scrambling to figure out who to call when a real crisis hits. This is your emergency response team.

- Who Handles It: Senior leadership, Legal, Public Relations (PR), and sometimes Security teams.

- Example Scenarios: Direct threats of violence, coordinated doxxing campaigns against employees, or a viral misinformation event that demands a public statement.

- Standard Actions: Documenting and preserving all evidence, notifying the social media platform, contacting law enforcement if necessary, and activating the company’s crisis communication plan.

To give you a clearer picture, here is what a tiered workflow might look like in practice.

Sample Moderation Escalation Workflow

| Threat Level | Example Behaviors | Initial Action | Escalation Path |
|---|---|---|---|
| Level 1: Low | Spam, profanity, off-topic comments | Automated: Hide/delete comment. Manual: Issue warning. | If user repeats behavior 3 times, escalate to Level 2. |
| Level 2: Medium | Insults, borderline harassment, misinformation | Moderator: Review context, remove comment, issue a 24-hour suspension. | If behavior is severe or part of a coordinated attack, escalate immediately to Level 3. |
| Level 3: High | Direct threats, hate speech, doxxing | Senior Moderator: Immediately ban user, document all evidence. | Immediately notify: Head of Community, Legal, and PR teams. Activate crisis plan. |

This table shows how a tiered system provides clear, predictable actions for your team, removing ambiguity when the pressure is on.
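For teams that want to wire the sample table into a ticketing or moderation tool, here is one rough way to express it in Python. Treat it as a sketch under assumptions: the behavior labels, the `Incident` fields, and the choice to send unrecognized behaviors to Level 2 for human review are illustrative decisions, not part of any particular product.

```python
from dataclasses import dataclass

# Behavior labels mirror the sample table; the exact strings are assumptions.
LEVEL_BY_BEHAVIOR = {
    "spam": 1, "profanity": 1, "off_topic": 1,
    "insult": 2, "borderline_harassment": 2, "misinformation": 2,
    "direct_threat": 3, "hate_speech": 3, "doxxing": 3,
}


@dataclass
class Incident:
    behavior: str
    repeat_count: int = 0      # times this user has repeated the behavior
    severe: bool = False       # a moderator judged the behavior severe
    coordinated: bool = False  # looks like part of a coordinated attack


def route(incident: Incident) -> int:
    """Return the escalation level (1, 2, or 3) for an incident."""
    # Assumption: anything we cannot classify goes to a human at Level 2.
    level = LEVEL_BY_BEHAVIOR.get(incident.behavior, 2)
    if level == 1 and incident.repeat_count >= 3:
        level = 2  # repeated low-level behavior moves up a tier
    if level == 2 and (incident.severe or incident.coordinated):
        level = 3  # severe or coordinated cases go to crisis response
    return level
```

Keeping the mapping in one dictionary means a policy change only touches the data, not the routing logic.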

Building out this three-tiered workflow transforms your anti-trolling playbook from a theoretical document into a practical, actionable plan. It empowers your team to protect your community and your brand, no matter what gets thrown their way.

Using AI as Your First Line of Defense

Trying to moderate a busy comment section by hand is like trying to bail out a sinking boat with a teaspoon. It’s just not realistic. The sheer volume of comments, especially if you’re hit with a coordinated troll attack, makes it impossible for even the best human team to keep up.

This is where AI-powered automation becomes essential. It’s no longer a luxury; it’s a critical part of your defense.

Think of an AI moderation tool as your brand’s always-on digital security guard. It works 24/7, scanning every single comment for trouble the instant it’s posted. This isn't about replacing your team—it's about making them more effective. By automating that first pass, you free up your human moderators from the soul-crushing work of sifting through obvious spam and hate speech.

This lets your team apply their skills where they really matter: on the tricky, nuanced comments that demand human judgment. Was that a genuinely frustrated customer or a subtle concern troll? That’s where a person shines. This hybrid approach—letting technology handle the scale and people handle the strategy—is the new standard.

How AI Actually Spots and Deals With Trolls

Modern AI moderation tools are a lot smarter than a simple list of banned words. They use advanced technology to understand the intent behind the words, which is a much more powerful and accurate way to protect your community.

This intelligent filtering relies on a few key technologies working in concert:

- Natural Language Processing (NLP): This is the brains of the whole operation. NLP gives the AI the ability to understand context, sentiment, and nuance. It can pick up on sarcasm, spot passive-aggressive digs, and identify threats even when they don’t contain any obvious red-flag keywords.

- Keyword and Phrase Filtering: While NLP is great for nuance, you still need a hard-and-fast blocklist. Keyword filtering is the simple, direct way to instantly zap comments containing profanity, slurs, or specific terms you want to keep off your page.

- User History Analysis: A good AI tool doesn’t just look at a comment in isolation; it looks at the person who posted it. It can track user behavior to identify repeat offenders, flag accounts that only post low-quality comments, and even recognize patterns that suggest one person is behind multiple troll accounts.
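To make the two simpler layers concrete, here is a small Python sketch of keyword filtering plus a basic per-user offense log. It does not attempt the NLP layer, which needs a trained model. The blocklist terms, `is_blocked`, and `OffenseLog` are placeholders invented for this example; a real tool would use a much longer list and persistent storage.

```python
import re
from collections import Counter

# Placeholder terms for illustration only; use your own list in practice.
BLOCKLIST = {"scam", "idiot"}


def build_pattern(terms):
    """Compile one case-insensitive regex that matches any whole blocked word."""
    escaped = sorted(re.escape(term) for term in terms)
    return re.compile(r"\b(?:" + "|".join(escaped) + r")\b", re.IGNORECASE)


PATTERN = build_pattern(BLOCKLIST)


def is_blocked(comment: str) -> bool:
    """True if the comment contains a blocklisted word as a whole word."""
    return PATTERN.search(comment) is not None


class OffenseLog:
    """Counts blocked comments per user so repeat offenders stand out."""

    def __init__(self):
        self._counts = Counter()

    def record(self, user_id: str, comment: str) -> int:
        """Log the comment if it is blocked and return the user's running total."""
        if is_blocked(comment):
            self._counts[user_id] += 1
        return self._counts[user_id]
```

The `\b` word boundaries matter: they stop an innocent word like "scampi" from tripping a filter on "scam", which is a common source of false positives with naive substring matching.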

Putting AI to Work in the Real World

The real magic of AI is its ability to take immediate, consistent action based on the moderation rules you’ve established. This turns your policy from a document sitting in a folder into a living, automated defense system.

For example, you can set up an AI tool to do things like:

- Automatically Hide Abusive Comments: Anything containing clear hate speech or violent threats can be made invisible to your audience in milliseconds.

- Flag "Gray Area" Content for Review: If a comment is borderline—maybe it’s sarcastic, or maybe it’s just rude—the AI can set it aside in a queue for a human moderator to make the final call.

- Spot and Report Bot Attacks: By analyzing how fast accounts are posting and how similar their comments are, AI can identify coordinated bot activity, helping you shut it down quickly.

By automatically handling the flood of clear-cut violations, AI effectively clears the noise. This gives your human team the breathing room to make thoughtful, strategic decisions on the tough cases, which goes a long way in preventing moderator burnout.
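The bot-spotting idea (many accounts, similar text, short time span) can be approximated with nothing more than Python's standard library. This is a deliberately simple sketch, not how any specific moderation product works: the `Comment` fields, the 60-second window, the three-account minimum, and the 0.9 similarity threshold are all assumed values you would tune on your own data.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class Comment:
    account: str
    posted_at: float  # seconds, e.g. a Unix timestamp
    text: str


def _similar(a: str, b: str, threshold: float) -> bool:
    """Case-insensitive similarity check using difflib's ratio (0.0 to 1.0)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio() >= threshold


def flag_coordinated_accounts(comments, window_s=60.0, min_accounts=3,
                              threshold=0.9):
    """Return accounts that posted near-identical text within one time window."""
    ordered = sorted(comments, key=lambda c: c.posted_at)
    flagged = set()
    for i, seed in enumerate(ordered):
        accounts = {seed.account}
        for other in ordered[i + 1:]:
            if other.posted_at - seed.posted_at > window_s:
                break  # later comments fall outside this seed's window
            if _similar(seed.text, other.text, threshold):
                accounts.add(other.account)
        if len(accounts) >= min_accounts:
            flagged |= accounts
    return flagged
```

Pairwise comparison like this is fine for a single post's comment thread but gets slow on very large volumes, which is one reason dedicated tools use more scalable techniques.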

The technology is always improving, too. Exploring the different AI comment moderation tools available can show you what’s possible, from real-time spam detection to sentiment analysis that’s sharp enough to identify purchase intent. The right tool acts as a force multiplier, allowing your team to build a safer and more positive community without needing to hire an army of moderators.

Measuring the Success of Your Anti-Trolling Strategy

Alright, you’ve put a strategy in place to fight back against social media trolls. That’s a massive step. But how can you be sure it's actually making a difference? To prove your efforts are paying off, you need to look past surface-level numbers like follower counts and dig into the metrics that truly show how healthy your online community is.

Let's be realistic: success isn't about creating a world with zero trolls. It's about building a safer, more welcoming, and more engaging space for your actual customers. By tracking the right data, you can see exactly what’s working, tweak your approach, and show the real business value of smart moderation.

Core Quantitative Metrics to Track

The clearest way to see if your plan is working is to track specific, hard numbers. This data gives you a straightforward "before and after" snapshot of your strategy's impact.

Here are the essential metrics to start with:

- Reduction in Toxic Comments: This is your North Star. Keep a close eye on the percentage of comments you have to hide, flag, or delete because they violate your rules. A steady drop in this number is the most obvious sign that your playbook and tools are doing their job.

- Moderation Response Time: How fast are you (or your tools) getting to harmful content? The quicker you can act, the less time trolls have to derail conversations and sour the mood. A lower average response time is a huge win.

- Increase in Positive User Engagement: When you clear out the negativity, you create space for genuine interaction to flourish. You should see a natural rise in positive metrics like likes, shares, and—most importantly—the number of thoughtful, on-topic comments from your real audience.

These numbers will be the backbone of your reporting, drawing a direct line between your moderation efforts and the health of your community.
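If you can export your comment log, the first two metrics take only a few lines to calculate. The sketch below assumes a hypothetical export where each comment has a posting time and, if it was hidden or deleted, a moderation time; the `CommentRecord` shape and function names are invented for this example.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class CommentRecord:
    posted_at: float                      # seconds, e.g. a Unix timestamp
    moderated_at: Optional[float] = None  # None if the comment was never actioned


def toxic_comment_rate(records) -> float:
    """Share of comments that had to be hidden, flagged, or deleted."""
    if not records:
        return 0.0
    actioned = sum(1 for r in records if r.moderated_at is not None)
    return actioned / len(records)


def average_response_seconds(records) -> Optional[float]:
    """Mean delay between posting and moderation, or None if nothing was actioned."""
    delays = [r.moderated_at - r.posted_at
              for r in records if r.moderated_at is not None]
    return mean(delays) if delays else None
```

Run both functions on the same date range before and after you roll out your playbook, and compare like with like: a quiet holiday week against a product-launch week will skew either number.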

Measuring Sentiment and Community Health

While the numbers tell a big part of the story, you can't ignore the overall "vibe." Qualitative feedback helps you understand how your audience feels about the changes. It adds the human element to your data.

Measuring success is about proving you’ve made your corner of the internet a better place. It’s about showing that by reducing negativity, you’ve increased positive engagement and strengthened customer trust, which directly benefits the business.

A simple community survey can work wonders here. Just ask your followers directly: Do they feel the comment sections are more positive now? Do they feel safer engaging with your posts?

Another fantastic metric is your community sentiment score. This is all about analyzing the emotional tone of the conversations happening on your page. AI-powered tools like FeedGuardians can automatically scan comments and give you a real-time read on whether the overall mood is shifting from negative to positive. Combining direct feedback with sentiment analysis gives you the complete picture of your anti-trolling success.

Answering Your Toughest Trolling Questions

Even with a great strategy and the right tools, you'll inevitably run into some tricky situations when dealing with trolls. Let's tackle some of the most common questions community managers grapple with, so you can handle those gray areas like a pro.

Should I Engage With or Just Ignore a Troll?

The age-old advice is "don't feed the trolls," and honestly, it’s solid. Most of the time, jumping into the fray just gives a disruptive person the attention they’re looking for. It pulls your brand into a messy, pointless back-and-forth. Ignoring them—and hiding or deleting their comments—is like cutting off their oxygen supply.

But there is one rare exception. If a troll is spreading specific misinformation that could actually fool your audience, it's worth stepping in. Make a single, calm, fact-based correction. Post the right info, and then walk away. Hide any follow-up replies from the troll and don't get pulled back in.

What if I Accidentally Block a Real Customer?

That’s a totally valid fear, and it’s exactly why you need a clear appeals process. Your community guidelines should be easy to find and include a straightforward way for people to reach out if they think they've been moderated by mistake. A dedicated email address works perfectly.

This approach shows you're transparent and acting in good faith. If a real customer gets caught in the crossfire, a quick, sincere apology and a reversal can turn a bad situation around. It can even make them feel more loyal to your brand.

Can My Brand Get in Legal Trouble for Deleting Comments?

For the most part, no. Think of your brand's social media page as your digital property. You get to set the house rules. As long as you're removing content that breaks your own published community guidelines, you're well within your rights. This isn't a First Amendment issue: the First Amendment protects people from government censorship, not from a private company moderating its own space.

The real secret to staying out of hot water is consistency. Apply your rules fairly to everyone, every time, without targeting specific viewpoints. Documenting your policies and the actions you take is your best line of defense.

Protecting your community requires a proactive and consistent game plan. FeedGuardians uses AI to automatically hide harmful comments, spam, and hate speech in real-time, freeing up your team to focus on building relationships with your actual customers. See how our AI-powered comment management can safeguard your brand.
