Quick Summary

| Key Insight | What You Need to Know |
|---|---|
| Prevents Brand Damage | It catches harmful content before it can stain your public image or tie your brand to negativity. |
| Encourages Positive Conversations | By weeding out the trolls and toxicity, it clears the way for genuine discussions and valuable customer feedback. |
| Lightens the Moderation Load | It automates the grunt work of removing obvious rule-breakers, freeing up your team to focus on building a great community. |

At its core, a bad language filter is a tool that automatically finds and removes profanity, hate speech, and other toxic language from user-generated content. Think of it as a smart, digital bouncer for your online spaces, keeping the peace on social media, forums, and in-game chats.

Why a Bad Language Filter Is Your First Line of Defense

Picture this: you've just launched a fantastic ad campaign, and the comments are rolling in. But mixed in with all the praise are toxic comments, spam, and outright abuse. This isn't just a small problem; it's a real threat that can poison your community, wreck your brand’s reputation, and scare off new customers. An unmoderated comment section can become a serious liability, fast.

A modern bad language filter is so much more than a simple list of banned words. It's a cornerstone of any solid digital safety and online reputation management strategy. Old-school filters were easy to fool with clever misspellings or just couldn't grasp context. Today's systems are far more intelligent, using artificial intelligence to understand the nuances of conversation.

Protecting Your Brand's Reputation and Community Health

A safe online space isn't just a nice-to-have; it's essential for growing your business. When people feel safe, they're more willing to participate, make a purchase, and become loyal fans. Here’s how a good filter makes that happen:

- Prevents Brand Damage: It catches harmful content before it can stain your public image or tie your brand to negativity.

- Encourages Positive Conversations: By weeding out the trolls and toxicity, it clears the way for genuine discussions and valuable customer feedback.

- Lightens the Moderation Load: It automates the grunt work of removing obvious rule-breakers, freeing up your team to focus on building a great community.

The demand for this kind of protection is skyrocketing. Experts predict a 30-50% jump in the adoption of profanity filtering across platforms by 2026, largely because of the rise in toxic online behavior. In fact, recent surveys found that over 60% of users run into offensive language online every single week.

A healthy digital community is built on trust, engagement, and safety. Failing to moderate your online spaces is like leaving the front door of your business unlocked—it invites trouble and undermines the security your customers expect. Protecting this space is non-negotiable for sustainable success.

From Simple Blocklists to Smart AI: How Bad Language Filters Grew Up

The journey of the modern bad language filter is a perfect example of technology evolving from a blunt instrument into a precision tool. The earliest attempts at cleaning up online conversations were pretty basic, relying on what we call a static keyword list—or, more simply, a "blacklist."

Think of it like a nightclub bouncer with a very short, very specific list of troublemakers. If your name is on the list, you're not getting in. No questions asked. Likewise, if a comment contained a word from this digital blacklist, it was blocked or deleted. It worked for the most obvious stuff, but people quickly found ways around it.
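To make the bouncer analogy concrete, here is a minimal sketch of a static keyword list in Python. The word list and the function name are hypothetical placeholders chosen for illustration, not taken from any particular product.

```python
import re

# Hypothetical blocklist for illustration; a real one would be far longer.
BLOCKLIST = {"jerk", "idiot"}


def contains_blocked_word(comment: str, blocklist: set[str] = BLOCKLIST) -> bool:
    """Return True if any whole word in the comment is on the blocklist."""
    # Lowercase the text and split it into simple alphabetic words.
    words = re.findall(r"[a-z']+", comment.lower())
    return any(word in blocklist for word in words)


print(contains_blocked_word("You are an idiot"))  # exact match on the list
print(contains_blocked_word("You are an id1ot"))  # one swapped character slips through
```

The second call shows the weakness: a single swapped character is enough to get past an exact-match list.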

Getting Smarter Than a Simple Word Match

The cracks in the keyword list approach showed up almost immediately. Users got creative, using intentional misspellings (like "sh!t"), adding spaces between letters, or swapping in special characters. This forced the technology to get a bit more clever, leading to the use of Regular Expressions, or Regex.

Regex is like giving that bouncer a new rulebook. Instead of just looking for the exact name "John Smith," the bouncer can now spot patterns—anyone whose name starts with a "J" and ends with "th." In the world of moderation, a Regex rule could catch "f*ck," "f@ck," and "fuuuck" all at once. It was a definite improvement.
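As a rough sketch of that new rulebook, the Python snippet below builds one pattern that tolerates symbol swaps, repeated letters, and separators between letters. It uses the mild stand-in word "darn" instead of real profanity; the pattern and the function name are assumptions made for this example, and a production pattern would need much more care.

```python
import re

# Optional separators users might put between letters (spaces, dots, dashes, underscores).
SEP = r"[\s.\-_]*"

# Illustrative pattern for the stand-in word "darn":
# the vowel may be swapped for @, * or 4, and any letter may be repeated.
DARN_PATTERN = re.compile(
    rf"\bd{SEP}[a@*4]+{SEP}r+{SEP}n+\b",
    re.IGNORECASE,
)


def matches_evasion(comment: str) -> bool:
    """Return True if the comment contains a variant of the stand-in word."""
    return DARN_PATTERN.search(comment) is not None


for text in ["darn", "d@rn", "d*rn", "daaarn", "d a r n", "garden party"]:
    print(text, matches_evasion(text))
```

One rule now covers several spellings, but it is still pure pattern matching: it has no idea whether the word was meant as an insult or a joke.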

But here’s the problem: both keyword lists and Regex are completely clueless about context. They can't tell the difference between "This burger is the shit!" (a huge compliment) and a comment that's actually aggressive. This leads to a double failure—it frustrates good users and still misses genuinely toxic behavior.

As the diagram below shows, effective moderation is the bedrock of a healthy online community. It's what makes safety, trust, and real engagement possible.

Without that foundation of safety, you can't build the trust needed for people to truly connect and engage with your brand or each other.

To really understand this journey, it helps to see the different methods side-by-side.

Evolution of Bad Language Filter Technology

| Filtering Method | How It Works | Strengths | Weaknesses |
|---|---|---|---|
| Keyword Blacklists | Blocks comments containing exact words from a predefined list. | Simple to implement, fast, and catches the most common slurs and profanity. | Easily bypassed with misspellings or symbols. Lacks any context. High rate of false positives. |
| Regular Expressions (Regex) | Uses pattern matching to identify variations of banned words. | More flexible than simple lists. Catches common evasion tactics. | Still lacks context. Can become incredibly complex to write and maintain. Can slow down performance. |
| Machine Learning & NLP | AI models analyze the entire comment for context, sentiment, and intent. | Understands nuance, sarcasm, and slang. Differentiates between toxic and positive use of the same word. | Requires large datasets for training. More computationally intensive. Can have biases if not trained properly. |

This table really highlights the leap from simple pattern matching to genuine comprehension. Modern systems don't just see words; they understand meaning.

AI and Natural Language Processing Changed Everything

The real breakthrough came with Artificial Intelligence (AI), especially a field called Natural Language Processing (NLP). The move from simple word lists to AI-driven analysis has been a total game-changer, and AI text classifiers have become essential for catching nuanced toxicity.

Think of an NLP-powered filter as a seasoned security chief, not just a bouncer at the door. This chief doesn't just check IDs; they listen to the tone of conversations, understand sarcasm, and figure out the intent behind what someone is saying.

- Contextual Analysis: The AI looks at the whole sentence or comment, not just one "bad" word, to grasp the real meaning.

- Intent Detection: It can tell the difference between a sarcastic joke, an upset customer who needs help, and a troll trying to start a fight.

- Sentiment Analysis: The system reads the emotional tone—is the comment positive, negative, or neutral?

By weaving these abilities together, an AI-powered bad language filter can make incredibly smart calls. It knows "sucks" in "this new vacuum really sucks up dirt" is a good thing, while "your customer service sucks" is a problem that needs attention. For any brand serious about protecting its community without accidentally silencing its biggest fans, this level of understanding is non-negotiable.

If you're curious about the different options out there, we've put together a comprehensive look at the leading AI comment moderation tools.

The Hidden Costs of an Outdated Profanity Filter

Here’s a hard truth: an outdated profanity filter can be just as damaging as having no filter at all. While the intention is good—to create a safe online space—a rigid, context-blind system often ends up causing more problems than it solves. These older tools run on a simple "if this, then that" logic, and that’s where the trouble starts. This oversimplified approach creates two major business risks that quietly chip away at your brand’s trust and community health.

The first big problem is the false positive. This is when your filter mistakenly blocks a perfectly innocent comment. Picture a customer excitedly posting, "This new feature is the absolute bomb!" only to see it vanish because "bomb" is on some ancient keyword list. What happens next? The customer feels unfairly censored, their positive feedback is lost, and they’ll think twice before engaging with you again.

Just as dangerous is the flip side: the false negative. This is when genuinely toxic content slips right through because it uses clever misspellings, evolving slang, or emojis the filter doesn’t recognize. This failure lets harmful language poison your comment sections, driving away your real community members and tarnishing your brand’s reputation by association.

When Good Intentions Go Wrong

At the heart of it, the issue is a complete lack of contextual understanding. A word that’s harmless in one culture can be deeply offensive in another, and slang changes faster than any manual list can keep up. An outdated bad language filter can't tell the difference between an aggressive insult and a regional turn of phrase, which leads to frustrating and alienating experiences for your audience. It's clear that a better approach is needed.

A rigid profanity filter doesn't just block words; it blocks communication. By failing to understand intent and context, it stifles valuable customer feedback, silences brand advocates, and creates a sanitized but lifeless community where genuine conversation cannot thrive.

This isn’t just a theoretical problem. A recent survey found that a staggering 75% of public universities were using secret profanity blacklists that resulted in over-the-top censorship. For example, some institutions banned words like "chicken" or the names of controversial local statues. They were shutting down legitimate conversations simply because a blunt filter couldn’t grasp the context.

The Real Cost to Your Brand

When you add it all up, the hidden costs of a poorly tuned bad language filter mount quickly. Each false positive represents a potentially lost customer or a negative review waiting to be written. Every false negative that lets spam or abuse through (like the kind from automated bots) pushes your real audience further away. You can learn more about how to spot and manage the problem of bots commenting on Instagram in our detailed guide.

Protecting your brand requires a system that’s as dynamic and nuanced as human language itself. It has to be smart enough to adapt to cultural shifts, understand intent, and make decisions that cultivate a genuinely safe and engaging online space.

How to Build a Smarter Content Moderation Strategy

Let’s be honest, the old ways of filtering content just don't cut it anymore. A truly effective moderation strategy isn't about blocking more words; it's about building a smarter system. We need something that gets nuance, respects context, and actually fits your brand's unique community standards.

It’s time to move away from rigid, one-size-fits-all blocklists and embrace a more dynamic approach built on customization and smart workflows.

The cornerstone of any modern strategy is customization. Every brand has a different voice and a different tolerance for what's considered "edgy." Think about it: a gaming community’s chat is going to have a completely different vibe than a financial services forum. A smart bad language filter lets you define the rules of engagement for your audience, rather than being stuck with a generic, pre-canned list of no-no words.

Establishing Smart Escalation Rules

A big part of a custom-fit strategy is creating intelligent escalation rules. Not every flagged comment is a five-alarm fire, and your response shouldn't be either. An advanced system knows how to automate the easy calls, freeing up your human moderators to tackle the genuinely tricky situations.

This naturally creates a tiered response system:

- Level 1 Automation: The system instantly handles clear-cut violations. Think hate speech, spammy links, or severe profanity. These comments are hidden or deleted automatically, no human touch needed.

- Level 2 Flagging: The gray areas—sarcasm, complex complaints, or culturally specific slang—get flagged and sent to a human moderator. This "human-in-the-loop" model ensures that content needing a careful eye gets one.
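The two levels above can be sketched as a simple threshold check. This Python example assumes the filter produces a toxicity score between 0 and 1; the score, the threshold values, and all names here are hypothetical and would need tuning for a real community.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_HIDE = "auto_hide"        # Level 1: clear-cut violation, no human needed
    HUMAN_REVIEW = "human_review"  # Level 2: gray area, send to a moderator
    APPROVE = "approve"            # nothing worrying detected


@dataclass(frozen=True)
class Thresholds:
    auto_hide: float = 0.9  # assumed cutoff for automatic hiding
    review: float = 0.5     # assumed cutoff for human review


def route_comment(toxicity_score: float, thresholds: Thresholds = Thresholds()) -> Action:
    """Map a toxicity score in [0, 1] to a moderation action."""
    if not 0.0 <= toxicity_score <= 1.0:
        raise ValueError("toxicity_score must be between 0 and 1")
    if toxicity_score >= thresholds.auto_hide:
        return Action.AUTO_HIDE
    if toxicity_score >= thresholds.review:
        return Action.HUMAN_REVIEW
    return Action.APPROVE


print(route_comment(0.95))  # Action.AUTO_HIDE
print(route_comment(0.60))  # Action.HUMAN_REVIEW
print(route_comment(0.10))  # Action.APPROVE
```

Keeping the thresholds in one small object makes it easy to loosen or tighten the rules without touching the routing logic.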

This hybrid approach is a game-changer for your team's workload. Instead of drowning in a sea of comments, your moderators can focus their expertise where it truly counts: on the nuanced content that the AI was smart enough to escalate.

The Power of Pairing Sentiment with Intent

Now, here's where things get really interesting. The magic happens when you combine sentiment analysis (the emotional tone of the comment) with intent detection (what the person is trying to accomplish). This powerful duo turns a simple bad language filter into a genuine business intelligence tool. It’s the difference between just hearing noise and actually understanding the conversation.

Take a comment like this: "Where the heck is my order!? This is taking forever!"

An old-school filter might just see "heck" and flag it. A much more sophisticated system, however, decodes what's really going on.

- Sentiment Analysis: Detects strong negative emotion (frustration, anger).

- Intent Detection: Identifies a clear customer service issue (a problem with a purchase or delivery).

Instead of just hiding the comment, the system can automatically route it straight to your customer support queue. This move transforms a public complaint into a golden opportunity to show off your great service and solve a customer's problem. You're not just moderating a comment; you're actively saving a customer relationship. For a deeper look at the tactics involved, check out our complete guide on social media content moderation.
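Here is a toy Python sketch of that routing idea. A real system would use trained sentiment and intent models; the keyword sets below are stand-ins invented for this example, as are the destination names.

```python
import re
from typing import NamedTuple

# Toy cue lists standing in for real sentiment and intent models (assumptions).
NEGATIVE_CUES = {"forever", "late", "terrible", "worst", "broken"}
SUPPORT_CUES = {"order", "delivery", "refund", "shipping"}


class Route(NamedTuple):
    destination: str
    priority: str


def route(comment: str) -> Route:
    """Pick a destination from a crude sentiment signal and a crude intent signal."""
    tokens = set(re.findall(r"[a-z]+", comment.lower()))
    is_negative = bool(tokens & NEGATIVE_CUES)
    is_support_issue = bool(tokens & SUPPORT_CUES)

    if is_support_issue:
        # A service issue goes to support; frustration raises its priority.
        return Route("support_queue", "high" if is_negative else "normal")
    if is_negative:
        # Negative tone with no clear service intent: let a person decide.
        return Route("human_review", "normal")
    return Route("publish", "normal")


print(route("Where the heck is my order!? This is taking forever!"))
```

Note that the mild word "heck" plays no part in the decision. The comment is routed by what the customer needs, not by which words they used.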

As your business grows, applying these strategies across different languages is crucial. To see how this works in the real world, this multilingual support case study offers some great insights. By building a smarter strategy, you do more than just protect your brand—you start uncovering valuable insights hidden in plain sight.

Measuring the ROI of Your Moderation Efforts

So, how do you actually prove that a clean, well-moderated comment section is worth the investment? It's one thing to feel good about positive interactions, but justifying the cost of a bad language filter means tying your moderation work directly to business outcomes.

It's time to look past simple vanity metrics like the raw number of comments. Instead, we need to focus on key performance indicators (KPIs) that tell a story of real, tangible impact.

Think of it this way: a smart moderation strategy isn't a cost center. It's an engine for brand growth, customer loyalty, and reputation management. The right metrics will prove it.

Key Metrics That Reveal Business Impact

To build a strong business case, you have to connect the dots between your moderation efforts and the bottom line. The best place to start is by tracking a few critical KPIs that shed light on your system's efficiency, accuracy, and the overall customer experience.

Here are the essential metrics every brand should be watching:

- Comment Intervention Rate: What percentage of your total comments actually needs a moderator's touch (hiding, deleting, or flagging)? A high rate is a red flag for widespread toxicity or spam, highlighting the clear need for a filter.

- False Positive Rate: How often does your filter mistakenly hide a perfectly legitimate comment? Keeping this number low is absolutely crucial. It shows your system is intelligent enough to avoid silencing happy customers or burying valuable feedback.

- Time to Resolution: When a comment is really a customer service cry for help (like, "my order is late!"), how fast does your team jump in? Slashing this response time is a direct path to better customer satisfaction and stops public complaints from snowballing.
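If you want to compute these three numbers yourself, a minimal Python sketch might look like the following. The exact definitions are assumptions: the false positive rate is taken here as wrongly hidden comments divided by all legitimate comments, and Time to Resolution is summarized as a median. Your reporting may define them differently.

```python
from datetime import datetime, timedelta
from statistics import median


def intervention_rate(total_comments: int, moderated_comments: int) -> float:
    """Share of all comments that were hidden, deleted, or flagged."""
    return moderated_comments / total_comments if total_comments else 0.0


def false_positive_rate(wrongly_hidden: int, legitimate_comments: int) -> float:
    """Share of legitimate comments that the filter hid by mistake."""
    return wrongly_hidden / legitimate_comments if legitimate_comments else 0.0


def median_time_to_resolution(cases: list[tuple[datetime, datetime]]) -> timedelta:
    """Median gap between when a complaint was posted and when it was resolved."""
    seconds = [(resolved - posted).total_seconds() for posted, resolved in cases]
    return timedelta(seconds=median(seconds))


posted = datetime(2024, 1, 1, 9, 0)
cases = [
    (posted, posted + timedelta(minutes=10)),
    (posted, posted + timedelta(minutes=30)),
    (posted, posted + timedelta(minutes=20)),
]
print(intervention_rate(1000, 120))      # 0.12
print(false_positive_rate(10, 400))      # 0.025
print(median_time_to_resolution(cases))  # 0:20:00
```

A median is used instead of an average so that one very slow ticket does not distort the picture.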

By tracking these specific data points, you transform the abstract concept of "community safety" into a measurable business function. This framework allows you to show that an effective bad language filter isn't just about deleting profanity—it's about protecting engagement, improving service, and driving conversions.

Connecting Moderation to Your Bottom Line

Once you have this data, you can draw a straight line from your moderation work to your company's biggest goals. For instance, if you can show a steadily decreasing intervention rate, you're proving that you're building a healthier, more welcoming community. That almost always leads to higher user engagement and people spending more time on your site.

In the same way, a lightning-fast Time to Resolution on customer complaints discovered in your comments is a powerful testament to your brand's commitment to service. You can turn frustrated users into your most vocal advocates.

When you pair these moderation metrics with deeper insights from other tools, the picture becomes even clearer. We explore how to understand customer feelings with social media sentiment analysis tools in another guide, which can add a whole new layer to your reporting. This data-first approach makes it undeniable: intelligent moderation is a non-negotiable asset for any brand serious about sustainable growth.

Your Bad Language Filter Implementation Checklist

Bringing a great moderation strategy to life isn't about flipping a switch; it requires a clear, actionable plan. Think of this checklist as your step-by-step guide for moving from theory to a practical, effective system that works from day one.

The whole process starts with a hard look at your current digital turf. You can't fix a problem until you know exactly where it lives, so a thorough audit is the essential first step to pinpointing where toxicity is taking root and which of your channels need help the most.

Phase 1: Foundational Setup

This first phase is all about laying the groundwork. It’s where you define your brand's standards and pick the right tools for the job. Without clear guidelines, even the smartest technology is just flying blind.

Audit Your Channels: Get your hands dirty. Dive into your social media comments, forum posts, and user reviews to find the toxicity hotspots. Figure out which platforms and what types of content are attracting the most harmful language.

Define Community Guidelines: Write down the rules of the road for your community. This document should be clear, concise, and easy for anyone to find. It sets the standard for user behavior and becomes the playbook your filter will follow.

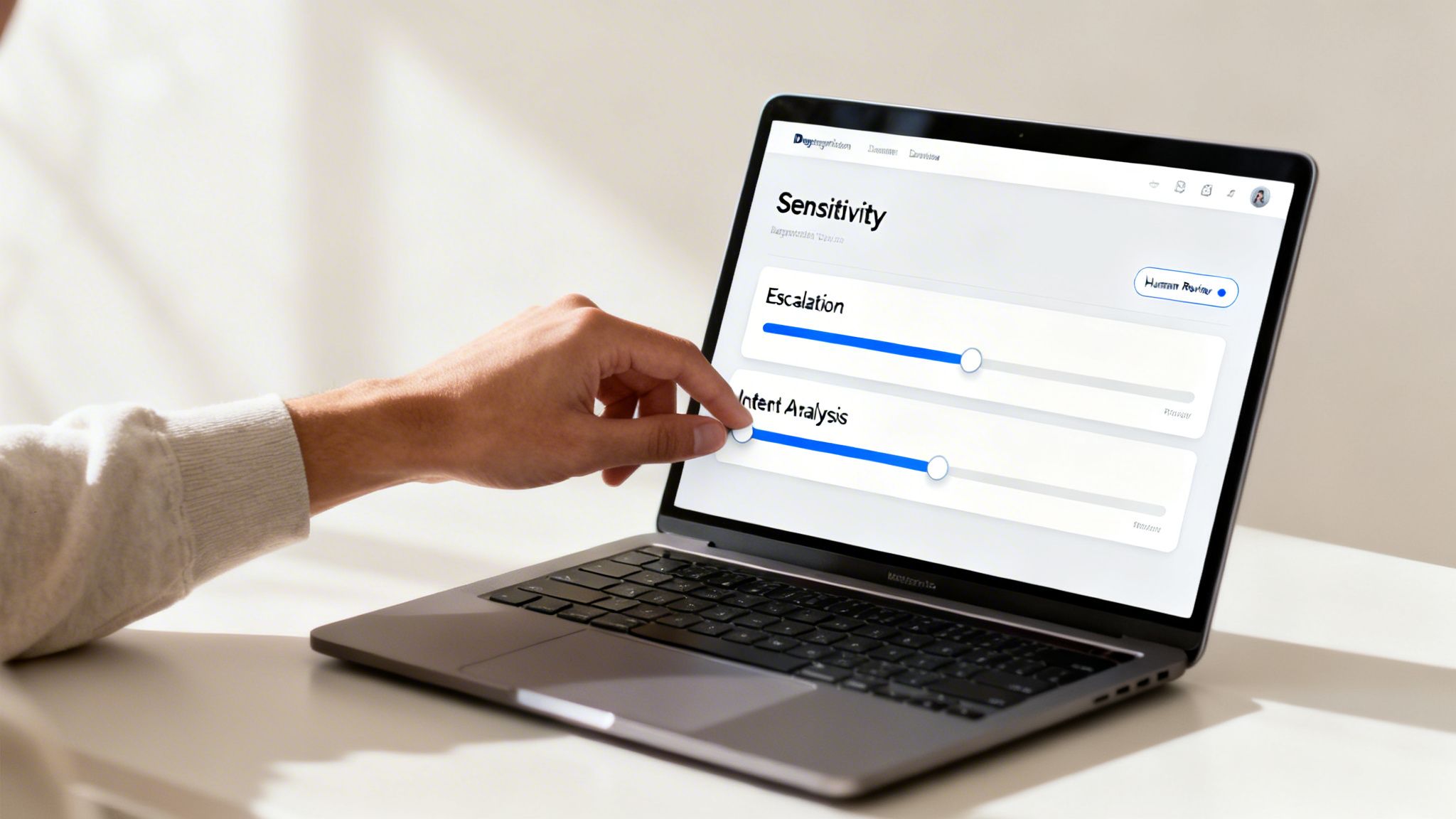

Choose a Customizable Tool: Don't settle for a generic filter. You need a tool that allows for deep customization. Look for features like adjustable sensitivity levels, the ability to create your own "naughty words" lists, and, most importantly, the smarts to tell the difference between sentiment and actual intent. A great tool like FeedGuardians gives you this level of control.

A successful moderation strategy isn't just about deleting negative comments. It's about actively cultivating a specific community culture. Your filter's rules should be a direct reflection of the brand voice and values you want to champion.

Phase 2: Configuration and Integration

With a solid foundation in place, it’s time to dial in your system and plug it into your daily workflows. This is where your strategy stops being a document and starts being a real, working part of your team.

- Configure Your Rulesets: One size never fits all. Create different moderation rules for different places. For instance, you’ll probably want a much stricter filter on comments under your paid ads than you would for a private, members-only community group.

- Establish Escalation Workflows: Design a smart process where the AI handles the low-hanging fruit (the obvious, clear-cut violations) but automatically flags the tricky, borderline comments for a human to review. This keeps your moderation accurate and saves your team a ton of time.

- Integrate with Support Tools: Connect your filter directly to your customer support software, like Zendesk or Freshdesk. This is a game-changer. It means comments identified as urgent customer service issues can be instantly turned into support tickets and routed to the right team for a quick resolution.
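To illustrate the idea of different rules for different places, here is a small Python sketch of per-channel rulesets. The channel names, thresholds, and extra blocked terms are all hypothetical, and the toxicity score is assumed to come from whatever filter you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ruleset:
    auto_hide_threshold: float  # score at or above this is hidden automatically
    review_threshold: float     # score at or above this goes to a human
    extra_blocked_terms: frozenset[str] = frozenset()


# Hypothetical channels: stricter under paid ads, more relaxed in a private group.
RULESETS = {
    "paid_ads": Ruleset(0.6, 0.3, frozenset({"scam"})),
    "members_group": Ruleset(0.9, 0.7),
}
DEFAULT_RULESET = Ruleset(0.8, 0.5)


def decide(channel: str, comment: str, toxicity_score: float) -> str:
    """Return 'hide', 'review', or 'approve' using the channel's own ruleset."""
    rules = RULESETS.get(channel, DEFAULT_RULESET)
    text = comment.lower()
    if any(term in text for term in rules.extra_blocked_terms):
        return "hide"
    if toxicity_score >= rules.auto_hide_threshold:
        return "hide"
    if toxicity_score >= rules.review_threshold:
        return "review"
    return "approve"


# The same comment and score get different outcomes on different channels.
print(decide("paid_ads", "meh, not impressed", 0.65))       # hide
print(decide("members_group", "meh, not impressed", 0.65))  # approve
```

Falling back to a default ruleset means a newly added channel is still moderated before anyone has configured it.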

Finally, you need to build a feedback loop. Your work isn't done after setup. Regularly check your filter's performance, review the content it flags, and tweak your rules over time. Your community will change, and your moderation strategy has to be smart enough to change with it.

To make this even more practical, here’s a condensed checklist to guide your implementation.

Bad Language Filter Implementation Checklist

A step-by-step guide for brands to set up and optimize their social media moderation strategy.

| Step | Action Item | Key Consideration |
|---|---|---|
| 1. Audit & Analyze | Review all public-facing channels for current toxicity levels. | Identify which platforms and content types are most problematic to prioritize your efforts. |
| 2. Define Standards | Draft and publish clear, accessible community guidelines. | Ensure the guidelines reflect your brand's voice and values. What culture do you want to build? |
| 3. Select Technology | Choose a filter with deep customization (e.g., custom lists, sensitivity). | Does the tool differentiate between sentiment, context, and intent? Avoid rigid, one-size-fits-all solutions. |
| 4. Configure Rules | Set up specific rulesets for different channels (e.g., ads vs. organic posts). | Start with a balanced approach (not too strict, not too lenient) and plan to adjust based on real-world results. |
| 5. Build Workflows | Create an escalation path for AI-flagged comments needing human review. | Who on your team gets the alert? What's the protocol for handling borderline cases? |
| 6. Integrate Systems | Connect the filter to your CRM or customer support platform. | How will you turn negative feedback or service complaints into actionable support tickets? |
| 7. Train Your Team | Educate your moderation and support teams on the new tool and workflows. | Ensure everyone understands the guidelines and their role in the escalation process. |
| 8. Monitor & Refine | Schedule regular reviews of the filter's performance and accuracy. | Use metrics like false positive/negative rates to continuously fine-tune your rules for better results. |

Following these steps will help you build a moderation system that not only protects your brand but also fosters a healthier, more engaging online community.

Frequently Asked Questions

It's completely normal to have questions when you're looking into content moderation. Let's walk through some of the most common ones we hear from business owners and social media managers who are considering a bad language filter.

Will a Bad Language Filter Hurt My Social Media Engagement?

Actually, it’s the other way around. A smart, modern filter is one of the best ways to increase positive engagement. Think of it as cleaning up the space so the real conversations can happen.

When you get rid of the toxic, hateful, or spammy comments, you’re creating a more welcoming environment for your actual customers. This gives the voices that matter most—your fans and advocates—the confidence to speak up, which builds stronger community trust and leads to more valuable discussions about your brand. It’s a classic case of quality over quantity.

Can an AI Filter Understand Sarcasm or Banter?

This is a great question, and it really highlights the difference between old-school filters and modern AI. A basic keyword-based filter? It gets confused easily. But a truly advanced AI solution is much more sophisticated.

A smart system doesn't just see a single word; it looks at the entire conversation, the user's history, and the overall sentiment.

By seeing the bigger picture, an intelligent filter can tell the difference between a bit of friendly banter between community members and a genuinely malicious insult. That kind of nuance is essential for making sure you don't accidentally block positive, human interactions.

How Much Manual Work Does an AI Filter Require?

An intelligent bad language filter is built to take work off your plate, not add more to it. Today's best systems can accurately automate decisions on over 80% of the obvious stuff, like spam links or severe profanity.

This frees up your team from the tedious job of comment-by-comment policing and shifts their focus to high-level strategy. They can spend their time on the complex, borderline cases the AI flags for review and on fine-tuning the filter’s rules. The goal is simple: let the tech handle the noise so your team can focus on building your community.

Ready to protect your brand and foster a healthier online community? FeedGuardians uses advanced AI to automatically hide harmful comments, detect customer needs, and keep your social media safe and profitable. Learn how FeedGuardians can transform your content moderation strategy today.
